These notes record how I used ONNX to speed up vector generation. Code to reproduce the results is in the GitHub repository amulil/vector_by_onnxmodel (accelerate generating vector by using onnx model).

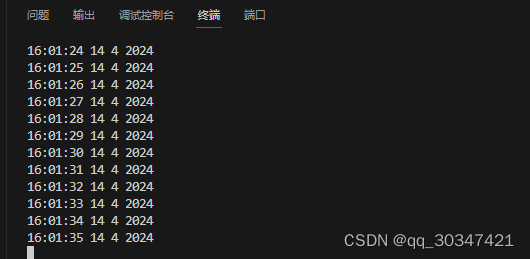
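A comparison like this depends on timing both backends in the same way. Below is a minimal sketch of such a timing loop, not the code from the repository: `mean_latency_ms` and `speedup` are hypothetical helper names, and the warm-up and run counts are assumed defaults. It only uses the Python standard library and accepts any zero-argument callable.

```python
import time
from statistics import mean
from typing import Callable, List


def mean_latency_ms(fn: Callable[[], object], warmup: int = 3, runs: int = 20) -> float:
    """Return the mean wall-clock time of fn() in milliseconds.

    Hypothetical helper: warm-up calls are executed but not timed, so that
    one-off costs (lazy initialisation, caches) do not skew the average.
    """
    for _ in range(warmup):
        fn()

    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        # perf_counter() returns seconds; convert the elapsed time to ms.
        samples.append((time.perf_counter() - start) * 1000.0)
    return mean(samples)


def speedup(baseline_ms: float, candidate_ms: float) -> float:
    """How many times faster the candidate is than the baseline."""
    return baseline_ms / candidate_ms
```

In use, each backend would be wrapped in a lambda, for example `mean_latency_ms(lambda: model.encode(sentences))`, where `model` and `sentences` are placeholders for whatever is being measured. On a GPU the timed call should return its results to the host before the timer stops, otherwise asynchronous work may not be counted.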

Results

OnnxModel Runtime gpu Inference time = 4.52 ms

Sentence Transformer gpu Inference time = 22.19 ms

References

GitHub - yuanzhoulvpi2017/quick_sentence_transformers: sentence-transformers to onnx, making sbert model inference faster